1.2: The Power of Music

Although we might argue over what is and what is not music, there is no question that music is important. Its significance ranges from the historical to the cultural to the biological. Music has played a role in every documented human society of the past and present. The oldest instrument found to date is an ivory flute created about 43,000 years ago, clear evidence that music is not a recent development. But why did humans start making music? The answers to that question might be discovered by examining the extraordinary effects that making and listening to music have on our brains.

Music, Human Experience, and the Brain

All of our activities are governed by the amazing organ situated inside of our skulls and between our ears: the human brain. And it is clear to religionists and evolutionists alike that there is something distinctly different between humans and other animals. But what is that difference? What makes us capable of complex reason and emotion? What gives us the ability to have an awareness of our own thought processes? It can’t simply be the size of our brains, as the brains of blue whales are much larger than those of humans, yet we don’t credit them with equivalent intelligence. Conversely, gorilla brains are only a little smaller than human brains, and they are not capable of the extreme creative and processing power of humanity. So what is it that makes our brains different?

What makes us human?

Consider just a few of the qualities that are claimed to be unique to humans. We recognize ourselves in the working order of things, and are capable of standing back as spectators and seeing our part in the greater picture. In other words, we have self-consciousness, and are capable of making choices based upon that information. Scientists use the mirror test (whether or not an animal recognizes its reflection as itself, rather than as another animal) to measure self-consciousness. But there are many species of primates that recognize their reflections as self, so that characteristic isn’t unique to humans. We have an appreciation of beauty and of aesthetic things, and are compelled as a species to create art. But there are some elephants who paint surprisingly beautiful imitations of the world around them, and some birds who decorate their nests. Does this mean that they possess the same capacity for aesthetic appreciation that we do?

What about humor? All people possess a sense of humor (though some have less than others) and can appreciate and express humor. Not only does humor require intelligence and understanding of situational variables, but it also requires the ability to see the odd, absurd and ironic. But there are chimpanzees that “laugh” when they are tickled, and if you watch young chimps playing long enough, you will eventually see one pull a prank on another and run away “laughing.”

What about awareness of death? While many creatures exhibit behaviors we could characterize as mourning when they lose a beloved human or fellow animal, humans have elaborate funeral rituals upon death. The ancient Egyptians actually buried people with physical objects so that they would have things with them in the next life. But elephants have been observed burying their dead (and the dead of other species) in addition to placing food, fruit, and flowers with their bodies. That sounds a lot like a funeral.

What about awareness of time? Humans experience sequences of events, form memories, and then predict future outcomes, and we have ways of measuring the passing of time in equal intervals (think of the second hand on a watch). Dogs and other animals certainly don’t have clocks or devices, but they reliably know when it is dinner time. Is this because of biological processes, or do they, too, have some sense of time?

What about love? It is arguably one of the most important motivational forces in a human’s life, but are we alone in this? Animals display behaviors that clearly indicate affection, but do they love each other the way we do? Cats will rub their companions and purr, whales can deliberately save seals from attack, and dogs display extraordinary altruism towards their owners and other creatures. In humans, these behaviors signal the thing we call love. Do animals experience it the way we do?

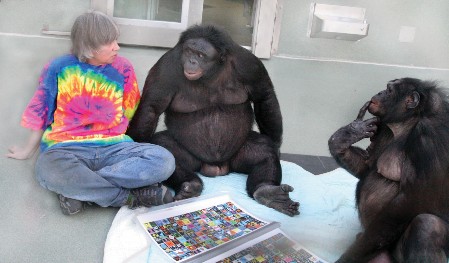

What about language? Humanity is the only species that uses language, although we are clearly not the only species that communicates. So what is different about us? Animals communicate in many ways with one another, and some gorillas have been taught sign language. Koko the gorilla reportedly understood over 2,000 spoken words and was able to use more than 1,000 signs to convey thoughts and emotions. She was even able to communicate compound ideas by using signs in ways they had not been taught to her. This certainly was a form of communication and language use, although Koko could never learn to speak. In addition, while some animals can understand words, sounds, and tone of voice, they do not comprehend syntax or communicate in complex sentences. Throughout history, human beings have devised hundreds of languages and endless dialects, despite the fact that we are born with no way to communicate verbally at all. So what is it about our brains that makes them capable of complex language, when the composition of our brains is so similar to that of chimpanzees and gorillas?

Language and the Human Brain

It comes down to the structure of our brains and to what those structures do. Generally, the human brain can be divided into three regions: the forebrain, midbrain, and hindbrain. This characteristic is absent in most animals. Although the size of the brain itself does not determine complex intelligence, the size of the brain in relationship to the size of the body matters. Humans win the rodeo with the largest brain of all animals in comparison

to the size of their bodies. In addition, the human brain has more neurons in its outermost layer (the cerebral cortex) than do other animals, and the insulation around nerve fibers in the human brain is thicker than that of other animals, enabling more rapid signal transfer between neurons. We literally think better and faster. But it is the structures responsible for language production and comprehension (Broca’s and Wernicke’s

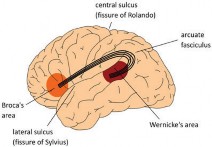

areas) that are unique to human beings. And, interestingly, both of these areas are heavily involved in the processing of music, which brings us to the crux of the matter: human beings are the only animals who employ “music” and “language”. That is what separates us from every other species on the planet. And it seems as though we do these things because we have been endowed with neuroanatomical structures that are unique to us. So what do these two critical brain regions do? And how is music cognition different from language cognition?

Early investigators learned about particular regions of the brain that control speech by observing patients’ limitations and then conducting postmortem exams. A French neurologist named Paul Broca observed a patient who understood language but who was unable to produce more than a few isolated words. When that patient died, Broca conducted a postmortem exam and found a lesion in the frontal lobe of the man’s forebrain. He deduced that this area was associated with the production of speech, and it was termed Broca’s area. Persons with damage to Broca’s area understand heard language and know what they wish to say but are unable to speak. They can’t speak because Broca’s area controls the physical production of speech. Essentially, our brains take in auditory stimuli, and then Broca’s area (in conjunction with Wernicke’s area, which we will discuss in a moment) converts the stimuli to neuronal representations that are then translated into the physical motions involved in producing speech sounds. To put this more simply, that area of the brain helps us understand what we hear, formulate articulate thoughts, and then convert them into speech.

About ten years later, a neurologist named Carl Wernicke identified a similar, but different, problem in patients who were unable to comprehend language or to construct meaningful sentences, even though they did not experience difficulty in producing articulate words. In postmortem examination, he found lesions at the junction of the parietal, temporal, and occipital lobes. He deduced that this area, now termed Wernicke’s area, had something to do with the understanding of language. Together, Broca’s and Wernicke’s areas handle the input of sound, the conversion of sound to understanding, and the utterance of spoken language. And these two areas are unique to humans. The genuinely fascinating thing is that for many years, these areas were thought to be exclusively involved in the processing of language. But recent researchers have discovered through fMRI (functional magnetic resonance imaging) technology that the two language processing centers are activated when we listen to and process music, even when it contains no text. In other words, your two language centers fire when you are listening to instrumental music and are not processing language. How bizarre is that? Why might that be? How are music and language similar in such a way as to explain this phenomenon?

Connections Between Speech and Music

What two things do you think of most easily when someone asks, “What is music?” Probably variation in pitch (frequency) and rhythm (time), even though those are not the only elements of music. Is there a pitch and a rhythm to speech? Read that question aloud to yourself, and note that not all words are spoken at the same pitch. This is because we emphasize more important words and raise our pitch when asking a question. Read it again and note that not all of the words have the same speed or length, because we vary the rhythm of speech sounds. And not only that: there is a syntax (the orderly arrangement of sounds in a system) to both language and music. They behave similarly in that the arrangement of sounds is predictable and conforms to patterns. And there we have it. Our brains are uniquely constructed for the successful intake, conversion, and execution of language and music. And the reason other animals can’t and don’t make music or speech (some animals make musical sounds, but the construction of these sounds doesn’t conform to syntactical rules, so these sounds aren’t actually music in the way we understand it) is that their brains lack the two areas involved in the processing of orderly sound systems. How crazy is that?

But what does this really mean about the nature of music and speech? It suggests that those are the two primary things that make us human and that distinguish us from all other creatures on the planet. That’s a significant point. But music isn’t only processed in Broca’s and Wernicke’s areas, although speech primarily is.

Before we examine that, however, we need to discuss how the brain is generally structured. The brain is divided into three main parts: the cerebrum, the cerebellum, and the brainstem. The cerebrum is the part that gives the brain its wrinkled appearance. It is divided into a left and right hemisphere connected by the corpus callosum, a bundle of fibers that transmits messages from one side of the brain to the other. The cerebrum performs higher functions like receiving and analyzing sensory input such as touch, sight, and sound, and also processes reasoning, emotion, memory, and fine motor control. Both Broca’s and Wernicke’s areas are situated in the cerebrum. The cerebellum is located under the cerebrum. It primarily coordinates muscle movements, and processes the body’s position in space for purposes of balance. The brainstem is the most evolutionarily primal area of the brain, one that we share with other primates. The brainstem performs primarily autonomic functions, those that don’t involve voluntary thought, like heart rate, breathing, body temperature, digestion, swallowing, coughing, and vomiting. You can see that as you move upward from the brainstem, the functions of the brain become more complex.

Now that we’ve handled some of the less-interesting technical information about the way the brain is structured, let’s go back to the cerebrum, where most complex brain function occurs. If we can arrive at an understanding of the way the cerebrum is divided and what kinds of information are processed in each area, it will help us to understand the differences in the way the brain processes language and music—perhaps the two most significant markers of what it is to be human. As previously mentioned, the cerebrum is divided into a left and right hemisphere that communicate with one another across the corpus callosum. Not all functions of the two hemispheres are shared. In general, the left hemisphere controls the physical motion on the right side of the body and the right hemisphere controls the physical motion on the left side of the body. Also, in general terms, the left hemisphere processes speech, comprehension, arithmetic, and writing. The right hemisphere controls creativity, spatial ability, and artistic and musical skills. This explanation is a bit misleading, however.

If you look down at a brain from the top, you can see it is divided into two distinct hemispheres. But if you look at the brain from the side, you can see that each hemisphere has distinct fissures that divide the brain into chunks, called lobes. Each hemisphere has four lobes. Moving from front to back, they are the frontal, parietal, temporal, and occipital lobes. Each can be divided even further into areas that serve specific functions (like Broca’s and Wernicke’s areas). But it is important to understand that no lobe or area of the brain functions in isolation. There are complex networks between the lobes of the brain and between the hemispheres that interact to process information. In that sense, our brains are the most complex computers on the planet! We’ll quickly take a look at what is generally processed in each lobe before circling back to talk about the differences between language and music processing in the brain.

Frontal lobe processing determines personality, behavior, emotions, judgment, planning, problem solving, speech (Broca’s area), fine body movement, intelligence, concentration, and one of the other defining characteristics of human beings: self-awareness. You can see that the frontal lobe (put your hand up to your forehead; that’s where the frontal lobe is) handles most of the things that make you, well… you. This is why traumatic injury to the frontal lobe from head impact is often absolutely devastating to the individual. You can lose what it is to be you if that area is damaged. The parietal lobe processes senses of touch, pain, and temperature, and interprets signals from vision, hearing, motor input, memory, and spatial perception. It also plays a role in the interpretation of language and words. Moving further back, the temporal lobe handles the understanding of language (Wernicke’s area), memory, hearing, sequencing, and organization. And finally, the occipital lobe interprets visual stimuli, including color, light, and movement. Whew! That was a lot of information about our highly complex human brain.

So let’s go back to examine language processing a little more deeply. First, our ears take in sound waves and translate them into electrical impulses that travel through nerves to different parts of the brain. The first place they go is the auditory cortex in the temporal lobe, where the sound is translated into neuronal representations (basically, your brain’s “image” of the sounds). The neuronal representations are then transmitted to the areas of the brain involved in interpreting them and deciding what to do with them. In the case of speech that is only heard, the auditory cortex and Wernicke’s area are primarily involved. In the case of language that is read and interpreted, the visual cortex and Wernicke’s area are primarily involved. In the case of speech that is produced, Wernicke’s area transmits neuronal representations to Broca’s area, which converts them into spoken language with the involvement of the motor cortex. But if language and music are so similar, what is different in the way that the brain processes language and music?

Well, to begin with, language processing is fairly isolated. As we’ve discussed, depending on the type of language activity a person is engaging in, there are a few areas primarily involved in processing the information. In the case of music cognition, however, the brain lights up like a Christmas tree. There is activity all over the place: in both hemispheres, in all four lobes, in the cerebellum, and even in the brainstem. With the advent of fMRI, we can see which areas of the brain light up as a person is engaging with music. As in the case of language, it depends upon the way in which you are engaging with music. But one thing is consistent no matter how you are engaging. Whether you are listening passively or listening actively (listening and thinking about what you are listening to), hearing music with or without words, playing music, reading music, writing and composing music, or improvising music, a unique neural network lights up all across the brain. Normally unrelated areas of the brain work in synchrony to process music, even when they do not coordinate to process any other type of information. That is pretty crazy! Even the brainstem, the part of the brain that handles automatic and subconscious processes, assists in music cognition.

So here we come to the crux of it. The human brain is an incredibly complicated computer. It handles incomprehensible amounts of information every second, and is more complex than the brains of other animals. There are two primary things that separate us from all other animals on the planet: language and music. Our brains are structured differently than are those of other animals, and it is these specialized structures that allow us to engage in language and music. But while language processing is complex, music cognition is even more complex, involving more brain regions and involving activity in both hemispheres, all lobes, the cerebellum, and the brainstem.

Beyond this, music also activates the limbic system, within which emotions and feelings are processed. It is capable of eliciting a sympathetic emotional response from listeners even in the absence of words, and our memory systems are intrinsically woven into the brain’s processing of music. This is why music can be used to “bring back” patients with Alzheimer’s, and why you can remember a song even if you haven’t heard it for 40 years. Suddenly, you’ll find yourself singing along and wondering how in the world you still have that information in there. But it’s in there because the retrieval pathways were laid down in more than one way. You won’t remember a poem or a story, or any other information, the way you remember music. For this reason, it is a profound educational tool: information can be entrained quickly and permanently when connected to music. Think about how many things were taught to you as a child through song, beginning with learning your letters! The A-B-C song is the most commonly taught song in the U.S. (and many other places have their own version) because it is such an effective way of teaching children to remember otherwise unfamiliar and disconnected information (the sound of each letter and the order in which the letters occur in the alphabet). If it is such a profound educational tool because of its effects on memory and retention, how else can music be used?